
If you use Mac OS X as your platform for development work, you may be interested to know how easy it is to use Apache Cassandra on the Mac. The following shows you how to download and set up Cassandra and its utilities, and how to use DataStax OpsCenter, a browser-based, visual management and monitoring tool for Cassandra.

Download the Software

DataStax makes available the DataStax Community Edition, which contains the latest community version of Apache Cassandra, along with the Cassandra Query Language (CQL) utility and a free edition of DataStax OpsCenter. To get DataStax Community Edition, go to Planet Cassandra and select the tar downloads of both the DataStax Community Server and OpsCenter. You can also use the curl command on the Mac to download the files directly to your machine. For example, to download the DataStax Community Server, you could enter the following at a terminal prompt: curl -OL http://downloads.datastax.com/community/dsc.tar.gz

Install Cassandra


Once your download of Cassandra finishes, move the file to whatever directory you’d like to use for testing Cassandra. Then uncompress the file (whose name will change depending on the version you’re downloading):
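A minimal sketch of the extraction step, assuming the archive was saved as dsc.tar.gz (the name the curl download above produces; yours may differ by version):

```shell
# Archive name as saved by the curl download; adjust to match your file.
ARCHIVE="dsc.tar.gz"

if [ -f "$ARCHIVE" ]; then
  # -x extract, -z decompress gzip, -f read from the named file
  tar -xzf "$ARCHIVE"
else
  echo "Archive $ARCHIVE not found in $(pwd)" >&2
fi
```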

Then switch to the new Cassandra bin directory and start up Cassandra:
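As a rough sketch, and assuming the tarball unpacked into a directory named dsc-cassandra-1.2.2 (a guess based on the Cassandra version in the cqlsh banner further down; check the actual directory name), starting the server could look like this. The use of sudo is also an assumption; it is only needed if Cassandra's default data and log directories are not writable by your user.

```shell
# Hypothetical directory name; it depends on the version you downloaded.
CASSANDRA_DIR="${CASSANDRA_DIR:-dsc-cassandra-1.2.2}"

if [ -x "$CASSANDRA_DIR/bin/cassandra" ]; then
  # Run in a subshell so the current directory of your session is unchanged.
  (cd "$CASSANDRA_DIR/bin" && sudo ./cassandra)
else
  echo "No cassandra executable found under $CASSANDRA_DIR/bin" >&2
fi
```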

Now that you have Cassandra running, the next thing to do is connect to the server and begin creating database objects. This is done with the Cassandra Query Language (CQL) utility. CQL is a very SQL-like language that lets you create objects as you’re likely used to doing in the RDBMS world. The CQL utility (cqlsh) is in the same bin directory as the cassandra executable:


[cqlsh 2.3.0 | Cassandra 1.2.2 | CQL spec 3.0.0 | Thrift protocol 19.35.0]

Cassandra has the concept of a keyspace, which is similar to a database in an RDBMS. A keyspace holds data objects and is the level where you specify options for data partitioning and the replication strategy. For this brief introduction, we’ll just create a basic keyspace to hold some example data objects we’ll create:
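Here is one way this could look. The keyspace name dev, the SimpleStrategy class and a replication factor of 1 are assumptions that suit a single-node test setup. The sketch saves the statement to a file and replays it with cqlsh -f; you can just as well type the statement at the cqlsh prompt.

```shell
# Path to cqlsh; the default assumes you are in Cassandra's bin directory.
CQLSH="${CQLSH:-./cqlsh}"

# Save the statement so it can be replayed with cqlsh -f.
cat > /tmp/create_keyspace.cql <<'CQL'
CREATE KEYSPACE dev
  WITH replication = {'class': 'SimpleStrategy', 'replication_factor': 1};
CQL

if command -v "$CQLSH" >/dev/null 2>&1; then
  "$CQLSH" -f /tmp/create_keyspace.cql
else
  echo "cqlsh not found at $CQLSH; statement saved to /tmp/create_keyspace.cql" >&2
fi
```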

Now that you have a keyspace created, it’s time to create a data object to store data. Because Cassandra is based on Google Bigtable, you’ll use column families (tables) to store data. Tables in Cassandra are similar to RDBMS tables, but are much more flexible and dynamic. Cassandra tables have rows like RDBMS tables, but they are a sparse column type of object, meaning that rows in a column family can have different columns depending on the data you want to store for a particular row. Let’s create a base table to hold employee data:
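A possible version of that table is sketched below. The table name emp, the four columns and the employee ID primary key follow the text; the keyspace name dev and the column names empid, emp_first, emp_last and emp_dept are hypothetical.

```shell
# Path to cqlsh; the default assumes you are in Cassandra's bin directory.
CQLSH="${CQLSH:-./cqlsh}"

# Four columns, with the employee ID as the primary key.
cat > /tmp/create_emp.cql <<'CQL'
CREATE TABLE dev.emp (
  empid     int PRIMARY KEY,
  emp_first varchar,
  emp_last  varchar,
  emp_dept  varchar
);
CQL

if command -v "$CQLSH" >/dev/null 2>&1; then
  "$CQLSH" -f /tmp/create_emp.cql
else
  echo "cqlsh not found at $CQLSH; statement saved to /tmp/create_emp.cql" >&2
fi
```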

The column family is named emp and contains four columns, including the employee ID, which acts as the primary key of the table. Note that a column family must have a primary key that’s used for initial query activity. Let’s now go ahead and insert data into our new column family using the CQL INSERT command:
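For illustration, an insert into such a table might look like the following. The keyspace dev, the column names and the sample values are all made up for this sketch.

```shell
# Path to cqlsh; the default assumes you are in Cassandra's bin directory.
CQLSH="${CQLSH:-./cqlsh}"

# One sample row; every value here is illustrative.
cat > /tmp/insert_emp.cql <<'CQL'
INSERT INTO dev.emp (empid, emp_first, emp_last, emp_dept)
  VALUES (1, 'fred', 'smith', 'eng');
CQL

if command -v "$CQLSH" >/dev/null 2>&1; then
  "$CQLSH" -f /tmp/insert_emp.cql
else
  echo "cqlsh not found at $CQLSH; statement saved to /tmp/insert_emp.cql" >&2
fi
```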

Notice how closely Cassandra’s CQL mirrors the RDBMS INSERT command. Other DML statements are similar as well:
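An UPDATE, for example, could be sketched like this, again with the hypothetical dev keyspace and column names. The WHERE clause targets the row by its primary key.

```shell
# Path to cqlsh; the default assumes you are in Cassandra's bin directory.
CQLSH="${CQLSH:-./cqlsh}"

# Change one column of the row identified by its primary key.
cat > /tmp/update_emp.cql <<'CQL'
UPDATE dev.emp SET emp_dept = 'fin' WHERE empid = 1;
CQL

if command -v "$CQLSH" >/dev/null 2>&1; then
  "$CQLSH" -f /tmp/update_emp.cql
else
  echo "cqlsh not found at $CQLSH; statement saved to /tmp/update_emp.cql" >&2
fi
```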

Querying data uses the familiar SELECT statement:

However, look what happens when you try to use a WHERE predicate and reference a non-primary key column:

In Cassandra, if you want to query columns other than the primary key, you need to create a secondary index on them:
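A sketch of that, assuming the department column is called emp_dept and using idx_dept as a made-up index name. Once the index exists, the same kind of WHERE query on the non-primary key column is accepted.

```shell
# Path to cqlsh; the default assumes you are in Cassandra's bin directory.
CQLSH="${CQLSH:-./cqlsh}"

# Create a secondary index, then filter on the indexed column.
cat > /tmp/index_emp.cql <<'CQL'
CREATE INDEX idx_dept ON dev.emp (emp_dept);
SELECT * FROM dev.emp WHERE emp_dept = 'fin';
CQL

if command -v "$CQLSH" >/dev/null 2>&1; then
  "$CQLSH" -f /tmp/index_emp.cql
else
  echo "cqlsh not found at $CQLSH; statements saved to /tmp/index_emp.cql" >&2
fi
```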

Installing and using DataStax OpsCenter

Installing DataStax OpsCenter on Mac involves working through the following steps in a terminal window:

Conclusion

That’s it – you’ve now got Cassandra and DataStax OpsCenter installed and running on your Mac. For other software such as various application drivers and client libraries, visit the DataStax downloads page.

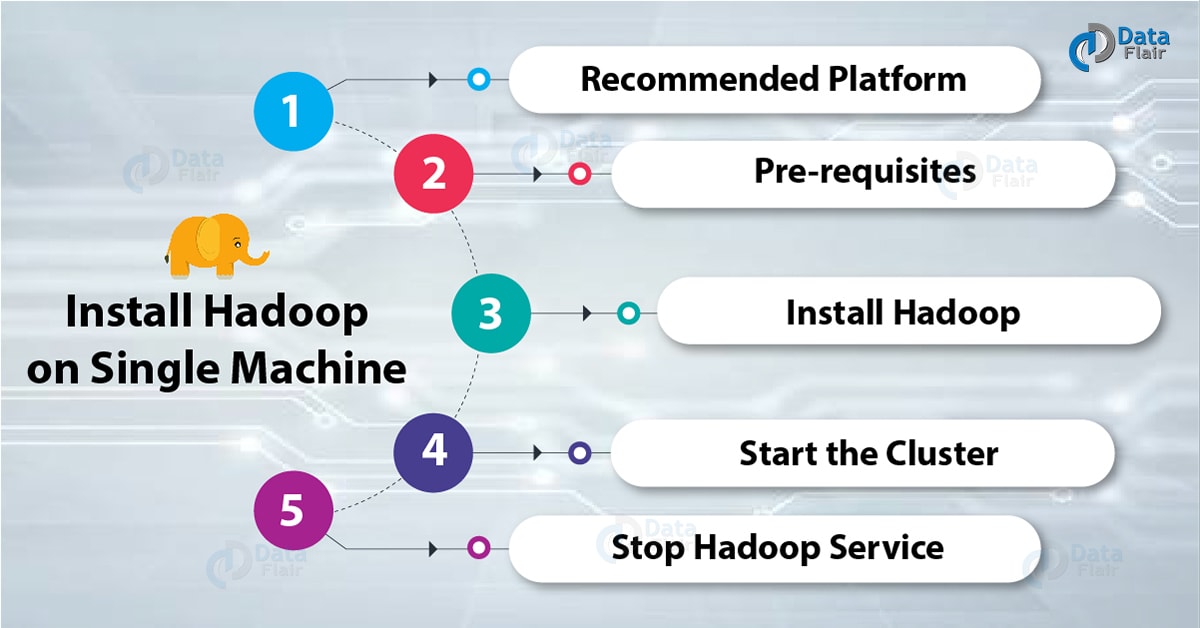

Here are step-by-step instructions to install plain vanilla Apache Hadoop and run a rudimentary MapReduce job on the Mac OS X operating system.

There are excellent tutorials and instructions for doing this on the web. Two in particular are the one from Apache and another from Michael Noll. These two resources cater more to a Linux/Ubuntu audience.

Before you begin, make sure that you have Java 6 or later installed on your Mac. You can download the Hadoop distribution from the Apache site, but the easier way on a Mac is to use Homebrew. The version I installed using brew was Hadoop 1.2.1, the most current stable Hadoop release at the time. It’s as easy as typing the command “brew install hadoop”. It will be downloaded and placed under /usr/local/Cellar by default, in a directory called hadoop.

There are three modes of running Hadoop: standalone, pseudo-distributed and fully distributed. Standalone mode is fairly straightforward, and you can easily run it by following the Apache page. Setting up a fully distributed cluster is pretty complex and beyond the scope of this introductory blog on Hadoop. The setup that we are going to look at is the pseudo-distributed mode, which is particularly useful as a development environment. These instructions are a hybrid of those found on the Apache Hadoop site and the Hadoop chapter in the Spring Data book.

Pseudo-distributed mode is basically a single-node installation of Hadoop in which each Hadoop daemon runs in its own Java process, hence “pseudo-distributed”.

The stock config files needed for a distributed Hadoop installation come empty, and you need to populate them with the following. This is well documented on the Apache site, and the following repeats what you find there.

conf/core-site.xml:
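The sketch below writes the setting that the Apache single-node guide for Hadoop 1.x gives for this file: the default file system points at a NameNode on localhost port 9000. It assumes you run it from the Hadoop install directory, or that HADOOP_CONF_DIR points at your conf directory, and it overwrites the existing file.

```shell
# Hadoop's configuration directory; "conf" is relative to the install directory.
CONF_DIR="${HADOOP_CONF_DIR:-conf}"
mkdir -p "$CONF_DIR"

# Point the default file system at HDFS on localhost.
# Note: this overwrites any existing core-site.xml.
cat > "$CONF_DIR/core-site.xml" <<'XML'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>fs.default.name</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>
XML
```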

conf/hdfs-site.xml:
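For a single node there is only one DataNode, so the Apache guide for Hadoop 1.x sets the block replication factor to 1. A sketch that writes that setting, under the same assumption about the conf directory and with the same overwrite caveat:

```shell
# Hadoop's configuration directory; "conf" is relative to the install directory.
CONF_DIR="${HADOOP_CONF_DIR:-conf}"
mkdir -p "$CONF_DIR"

# Keep a single copy of each block, since there is only one DataNode.
# Note: this overwrites any existing hdfs-site.xml.
cat > "$CONF_DIR/hdfs-site.xml" <<'XML'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>dfs.replication</name>
    <value>1</value>
  </property>
</configuration>
XML
```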

conf/mapred-site.xml:
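This file tells MapReduce clients where the JobTracker listens. The value localhost:9001 is the one used in the Apache single-node guide for Hadoop 1.x; the sketch makes the same assumption about the conf directory and also overwrites the existing file.

```shell
# Hadoop's configuration directory; "conf" is relative to the install directory.
CONF_DIR="${HADOOP_CONF_DIR:-conf}"
mkdir -p "$CONF_DIR"

# Address of the JobTracker for the pseudo-distributed setup.
# Note: this overwrites any existing mapred-site.xml.
cat > "$CONF_DIR/mapred-site.xml" <<'XML'
<?xml version="1.0"?>
<configuration>
  <property>
    <name>mapred.job.tracker</name>
    <value>localhost:9001</value>
  </property>
</configuration>
XML
```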

Next you need to make sure that you can do a passphraseless ssh to localhost. Try the following in a terminal window.

You might hit two issues. First, your sshd (the daemon process for ssh) may not be running. In that case, go to System Preferences / Sharing and enable Remote Login. Make sure that only local users on your system are allowed to ssh to your Mac.

The other issue is that ssh prompts for a password because your public key has not been added to your authorized keys. To avoid this, execute the following two commands in the terminal.
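The two commands are a key generation followed by an append to authorized_keys. The sketch below wraps them in a helper function, setup_passphraseless_ssh (a name made up here), and uses an RSA key with an empty passphrase; the key type is an assumption, and older guides often use DSA instead.

```shell
# Generate a passphraseless key pair (if missing) and authorize it for login.
# Usage: setup_passphraseless_ssh [KEY_DIR]   (KEY_DIR defaults to ~/.ssh)
setup_passphraseless_ssh() {
  key_dir="${1:-$HOME/.ssh}"
  mkdir -p "$key_dir" && chmod 700 "$key_dir"

  # Command 1: create the key pair with an empty passphrase.
  if [ ! -f "$key_dir/id_rsa" ]; then
    ssh-keygen -q -t rsa -N '' -f "$key_dir/id_rsa" || return 1
  fi

  # Command 2: add the public key to the list of authorized keys.
  cat "$key_dir/id_rsa.pub" >> "$key_dir/authorized_keys"
  chmod 600 "$key_dir/authorized_keys"
}
```

Calling setup_passphraseless_ssh with no argument works on ~/.ssh.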

Now you should be able to ssh into localhost.

Since this is your first installation of Hadoop, you need to format the Hadoop filesystem, HDFS. If you already have work under way in HDFS, don’t format it, or you will lose all the data.

Go to the directory where the Hadoop distribution is installed. If you installed it through brew and got the latest version at the time, it is under /usr/local/Cellar/hadoop/1.2.1.
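In Hadoop 1.x the format step is the namenode -format subcommand. A minimal sketch, assuming the hadoop executable is on your PATH (otherwise run bin/hadoop from the install directory):

```shell
# WARNING: formatting erases all existing HDFS metadata. First install only.
if command -v hadoop >/dev/null 2>&1; then
  hadoop namenode -format
  FORMAT_ATTEMPTED=yes
else
  echo "hadoop not found on PATH; run bin/hadoop from the install directory" >&2
  FORMAT_ATTEMPTED=no
fi
```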

Now start all the hadoop daemons:
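Hadoop 1.x ships a start-all.sh script for this. The sketch assumes the script is on your PATH; if it is not, run it from the bin directory of your Hadoop installation.

```shell
# Starts the NameNode, DataNode, SecondaryNameNode, JobTracker and TaskTracker.
START_SCRIPT="start-all.sh"

if command -v "$START_SCRIPT" >/dev/null 2>&1; then
  "$START_SCRIPT"
else
  echo "$START_SCRIPT not found on PATH; run it from Hadoop's bin directory" >&2
fi
```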

Once everything has started, you can verify the daemons in two ways:
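One common check is the JDK's jps tool, which lists running Java processes. The sketch below assumes jps is on your PATH and compares its output against the five daemons a Hadoop 1.x pseudo-distributed setup normally runs.

```shell
# Daemons expected in a Hadoop 1.x pseudo-distributed setup.
EXPECTED_DAEMONS="NameNode DataNode SecondaryNameNode JobTracker TaskTracker"

if command -v jps >/dev/null 2>&1; then
  running="$(jps)"
  for daemon in $EXPECTED_DAEMONS; do
    # -w matches whole words, so NameNode does not match SecondaryNameNode.
    if echo "$running" | grep -qw "$daemon"; then
      echo "$daemon: running"
    else
      echo "$daemon: NOT running"
    fi
  done
else
  echo "jps not found; it ships with the JDK" >&2
fi
```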

It should print out all the different hadoop daemon processes just started.

You can also inspect the NameNode, JobTracker and TaskTracker web interfaces at the following three URLs respectively.

Next, open a different terminal tab and create the directory /tmp/gutenberg/download. Project Gutenberg makes a lot of public-domain books available, which is convenient for the word count MapReduce job that we are going to run on Hadoop.
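With the default Hadoop 1.x ports, these are 50070 for the NameNode, 50030 for the JobTracker and 50060 for the TaskTracker; the ports differ if you changed them in the configuration. A quick sketch that probes each one with curl:

```shell
# Default Hadoop 1.x web UI addresses.
NAMENODE_URL="http://localhost:50070/"
JOBTRACKER_URL="http://localhost:50030/"
TASKTRACKER_URL="http://localhost:50060/"

for url in "$NAMENODE_URL" "$JOBTRACKER_URL" "$TASKTRACKER_URL"; do
  # -s silences progress output, -o discards the page, --max-time bounds the wait.
  if curl -s -o /dev/null --max-time 5 "$url"; then
    echo "reachable: $url"
  else
    echo "not reachable: $url"
  fi
done
```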

On the Mac, you can use the curl command to download the file like the following:
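A sketch of the download, covering the directory creation as well. The URL is an assumption based on Project Gutenberg's usual cache layout for ebook 4363 and may have changed, so check the book's page if it fails.

```shell
DOWNLOAD_DIR="/tmp/gutenberg/download"
# Assumed location of the plain-text edition of ebook 4363.
BOOK_URL="https://www.gutenberg.org/cache/epub/4363/pg4363.txt"

mkdir -p "$DOWNLOAD_DIR"

# -L follows redirects, -o names the local file, --max-time bounds the transfer.
curl -L --max-time 60 -o "$DOWNLOAD_DIR/pg4363.txt" "$BOOK_URL" \
  || echo "download failed; check the URL and your network connection" >&2
```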

This downloads a file called pg4363.txt.

Next we need to bring this file into HDFS using the hadoop dfs command.

The following command assumes that you have downloaded and installed Hadoop using brew, or that the Hadoop executable directory is on your system path.
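A minimal sketch of the copy, using the -copyFromLocal option of hadoop dfs. The HDFS target /user/gutenberg/download is an assumption, chosen to sit next to the /user/gutenberg/output directory used for the job output.

```shell
LOCAL_INPUT="/tmp/gutenberg/download"
# Assumed HDFS destination directory.
HDFS_INPUT="/user/gutenberg/download"

if command -v hadoop >/dev/null 2>&1; then
  # Copy the local directory, including pg4363.txt, into HDFS.
  hadoop dfs -copyFromLocal "$LOCAL_INPUT" "$HDFS_INPUT"
else
  echo "hadoop not found on PATH" >&2
fi
```

Afterwards, hadoop dfs -ls on the same HDFS path lists what was copied.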

We can confirm the file is copied to HDFS by using the following command.

The Hadoop distribution comes with a jar file that contains various example MapReduce jobs. We are going to run the word count job on the file that we just copied into HDFS.

This produces a lot of output on the terminal from the logging of the MapReduce job.
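The job could be launched roughly as follows. The jar location is a guess for a Homebrew install of Hadoop 1.2.1 (adjust HADOOP_HOME if your layout differs), and the HDFS input path is the assumed location of the copied file; the output path is the one named in the text.

```shell
# Assumed Homebrew layout; the examples jar ships with the distribution.
HADOOP_HOME="${HADOOP_HOME:-/usr/local/Cellar/hadoop/1.2.1/libexec}"
EXAMPLES_JAR="$HADOOP_HOME/hadoop-examples-1.2.1.jar"
HDFS_INPUT="/user/gutenberg/download"
HDFS_OUTPUT="/user/gutenberg/output"

if command -v hadoop >/dev/null 2>&1 && [ -f "$EXAMPLES_JAR" ]; then
  # Arguments: the job name, the HDFS input path, the HDFS output path.
  hadoop jar "$EXAMPLES_JAR" wordcount "$HDFS_INPUT" "$HDFS_OUTPUT"
else
  echo "hadoop or $EXAMPLES_JAR not found" >&2
fi
```

The output directory must not already exist in HDFS, or the job will refuse to start.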

Once completed you can go to the jobtracker UI at http://localhost:50030/ to see the job that was just run.

The output was generated in the directory /user/gutenberg/output.

We are particularly interested in the file part-r-00000 where the actual output was written. We can see the contents of the file like the following:

Or we can bring this file to our local file system as shown below:
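One option for this is hadoop dfs -getmerge, which concatenates every file in an HDFS directory into a single local file. In the sketch below the local destination /tmp/gutenberg/output is an arbitrary choice.

```shell
HDFS_OUTPUT="/user/gutenberg/output"
# Arbitrary local file that receives the merged result.
LOCAL_OUTPUT="/tmp/gutenberg/output"

mkdir -p "$(dirname "$LOCAL_OUTPUT")"

if command -v hadoop >/dev/null 2>&1; then
  # Merge all part files of the HDFS directory into one local file.
  hadoop dfs -getmerge "$HDFS_OUTPUT" "$LOCAL_OUTPUT"
else
  echo "hadoop not found on PATH" >&2
fi
```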

Make sure that you give the whole HDFS output directory as the source when copying to the local file system, since the command needs to combine the files when there are multiple output files.

That’s it! We have successfully run a simple word count MapReduce job on a single-node, pseudo-distributed Hadoop cluster!
